Explainable AI in Demand Forecasting: Building Trust When Stakes Are High

Demand forecasting has always been a tightrope walk. Planners are working with imperfect data, changing customer behavior, promotions that distort demand, supply constraints, and constant pressure to protect service levels without tying up too much cash in inventory. Over time, advanced analytics and AI have helped teams forecast more accurately, respond faster, and make decisions at scale.

But as forecasting models get more sophisticated, a new problem shows up: the forecast might be “right,” yet still hard to trust operationally. When the numbers suddenly shift, or the system recommends a meaningful change in production or inventory, teams naturally ask why before they act. And when service, revenue, compliance, and customer commitments are on the line, trust matters just as much as accuracy.

Why Accuracy Alone Isn’t Enough Anymore

Traditional forecasting often leaned on straightforward logic: historical averages, simple seasonality, rules of thumb, and planner experience. Those methods weren’t perfect, but they were easy to explain. You could usually point to a reason: a seasonal lift, a recent sales trend, a known customer event.

Modern AI can look at hundreds of signals at once. It finds patterns humans might miss, connects data across channels, and adapts continuously as conditions change. This is powerful, but it can also feel opaque to business users.

So when the model raises demand by 12% for a specific SKU, planners start asking practical questions:

- Is this increase because sales are accelerating or because of a promo?

- Is this a stable pattern, or will it swing back next week?

- How confident should we be before we commit inventory, capacity, or spend?

If the system can’t answer those questions clearly, people hesitate. They override the forecast manually, delay decisions, or rebuild the plan in spreadsheets “just to be safe.” Over time, this erodes confidence in the system, even if the model itself is statistically sound. The core issue often isn’t performance. It’s interpretability and trust.

What Explainability Means in Demand Planning

Explainable AI doesn’t mean showing planners algorithms or math formulas. In a business setting, explainability means the forecast is understandable, actionable, and defensible.

In demand planning, that usually looks like:

- Visibility into the key drivers behind a forecast change

- Clear flags when a forecast deviates from normal patterns

- Confidence signals that indicate how aggressively to act

- Traceability from the forecast back to the underlying data signals

Instead of getting a number with no context, planners see what’s influencing it—demand acceleration, channel shifts, changing seasonality, customer behavior, or external disruptions. That makes it easier to apply professional judgment in the right way, without blindly trusting the system or rejecting it outright.
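To make "visibility into the key drivers" concrete, here is a minimal sketch of how a forecast change could be split into per-driver contributions. It assumes a simple linear forecast, where each driver's contribution is its weight times the change in its value. The driver names, weights, and values are hypothetical and chosen only for illustration; non-linear models would need a dedicated attribution method instead.

```python
from dataclasses import dataclass


@dataclass
class DriverContribution:
    driver: str
    units: float  # change in forecast units attributed to this driver
    share: float  # fraction of the total forecast change


def explain_change(weights, previous, current):
    """Split the change in a linear forecast into per-driver contributions."""
    deltas = {
        name: weights[name] * (current[name] - previous[name])
        for name in weights
    }
    total = sum(deltas.values())
    contributions = [
        DriverContribution(name, units, units / total if total else 0.0)
        for name, units in deltas.items()
    ]
    # Largest absolute contribution first, so the main driver leads the report.
    return sorted(contributions, key=lambda c: abs(c.units), reverse=True)


# Hypothetical weights and driver values for one SKU (illustrative only).
weights = {"repeat_orders": 2.0, "promo_depth": 40.0, "seasonality_index": 100.0}
previous = {"repeat_orders": 400.0, "promo_depth": 0.0, "seasonality_index": 1.0}
current = {"repeat_orders": 450.0, "promo_depth": 0.5, "seasonality_index": 1.0}

report = explain_change(weights, previous, current)
for c in report:
    print(f"{c.driver}: {c.units:+.1f} units ({c.share:.0%} of change)")
```

With these made-up inputs, most of the change is attributed to repeat orders rather than the promotion, which is the kind of context a planner needs to judge whether the shift is likely to persist.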

Explainability also improves collaboration across functions. Finance teams want to understand revenue implications. Operations teams need confidence before committing capacity. Leadership needs clarity when making strategic decisions. A transparent forecasting system aligns these conversations around a shared view of what is happening and why.

How Explainability Changes Day-to-Day Planning

When forecasting is explainable, the daily conversation changes.

Teams spend less time debating whose number is “correct” and more time discussing what the signals mean and what to do next. Instead of “Do we trust this forecast?” the question becomes: “What’s changing—and how should we respond?”

Planners can validate shifts faster, prioritize exceptions more effectively, and act earlier rather than firefighting later. Over time, that builds confidence not just in the model, but in the entire planning process.
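One way to picture exception prioritization is a simple deviation score per SKU. The sketch below is illustrative only: it assumes a z-score of the new forecast against recent history is a reasonable measure of "deviates from normal patterns," and the SKU names, histories, and threshold of 2.0 are hypothetical.

```python
from statistics import mean, stdev


def deviation_score(history, new_forecast):
    """Z-score of the new forecast against recent history (needs 2+ points)."""
    spread = stdev(history)
    if spread == 0:
        return 0.0
    return (new_forecast - mean(history)) / spread


def rank_exceptions(items, threshold=2.0):
    """Return (sku, score) pairs with |score| >= threshold, largest first."""
    scored = [(sku, deviation_score(hist, fc)) for sku, (hist, fc) in items.items()]
    flagged = [(sku, z) for sku, z in scored if abs(z) >= threshold]
    return sorted(flagged, key=lambda pair: abs(pair[1]), reverse=True)


# Hypothetical recent demand history and new forecast per SKU.
items = {
    "SKU-A": ([100, 102, 98, 101, 99], 112),
    "SKU-B": ([200, 210, 190, 205, 195], 204),
    "SKU-C": ([50, 52, 48, 51, 49], 43),
}

exceptions = rank_exceptions(items)
for sku, z in exceptions:
    print(f"{sku}: deviation score {z:+.1f}")
```

Here SKU-B stays off the list because its new forecast sits well inside its usual variation, so the planner's attention goes to the two SKUs that actually moved.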

A Simple Real-World Example

Imagine demand for a critical SKU starts creeping up in a specific region. It’s gradual enough that traditional weekly reporting doesn’t make it look urgent. Everything still appears “within range.”

An AI model, however, detects a consistent shift across order frequency, channel mix, and customer behavior. It increases the forecast and assigns a moderate confidence level.

With explainability built in, the planner can see the rise is being driven mostly by repeat orders from a specific customer segment, and not a one-time spike. The confidence signal shows the pattern has held across multiple cycles.
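A confidence signal based on "the pattern has held across multiple cycles" could be sketched as a persistence count. This is an assumption about how such a signal might work, not a description of any specific product: the 5% lift threshold, the cycle cut-offs for each label, and the weekly numbers are all hypothetical.

```python
def persistence_confidence(actuals, baseline, min_lift=0.05):
    """Count the most recent consecutive cycles running above baseline.

    Returns (streak, label). The label cut-offs are illustrative assumptions.
    """
    streak = 0
    # Walk backwards from the latest cycle and stop at the first miss.
    for actual, expected in zip(reversed(actuals), reversed(baseline)):
        if expected > 0 and (actual - expected) / expected >= min_lift:
            streak += 1
        else:
            break
    if streak >= 6:
        label = "high"
    elif streak >= 3:
        label = "moderate"
    else:
        label = "low"
    return streak, label


# Hypothetical weekly demand for one SKU in one region, oldest first.
weekly_actuals = [100, 98, 103, 108, 110, 112, 115]
weekly_baseline = [100, 100, 100, 100, 100, 100, 100]

streak, label = persistence_confidence(weekly_actuals, weekly_baseline)
print(f"Above baseline for {streak} consecutive cycles: {label} confidence")
```

A rule this simple is easy to explain to a planner, which is the point: the label can be traced directly back to how many cycles the lift has lasted.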

That context makes the decision easier: adjust replenishment early, coordinate with sales, and prevent shortages without overreacting. The action feels informed, not speculative.

Why Trust Matters Even More When the Stakes Are High

In industries like pharma, FMCG, manufacturing, and regulated supply chains, the downside of getting it wrong is significant.

- Overproduction ties up working capital.

- Underproduction risks service failures and lost revenue.

- Compliance requirements demand auditability and consistency.

In these environments, explainability isn’t a “nice to have.” It’s often the difference between AI being adopted at scale, or being used cautiously by a small group while everyone else works around it.

When AI systems are transparent and traceable, leaders can defend decisions internally and externally. And the organization can expand usage confidently across cycles, regions, and product lines.

How SpectraONE Approaches Explainable Forecasting

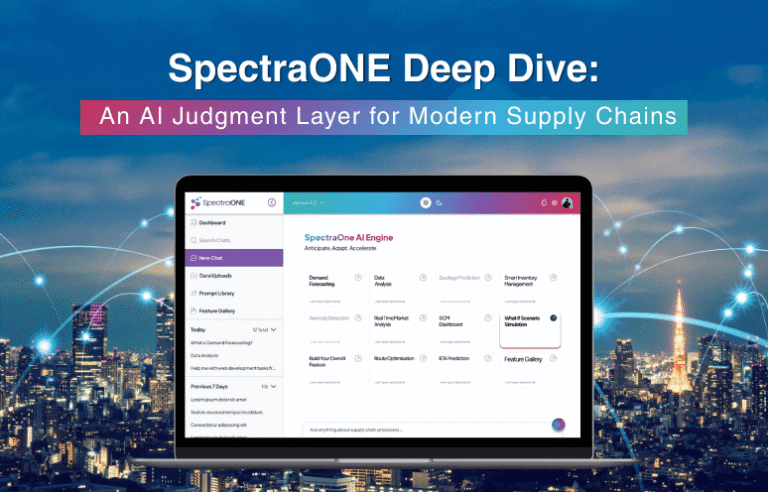

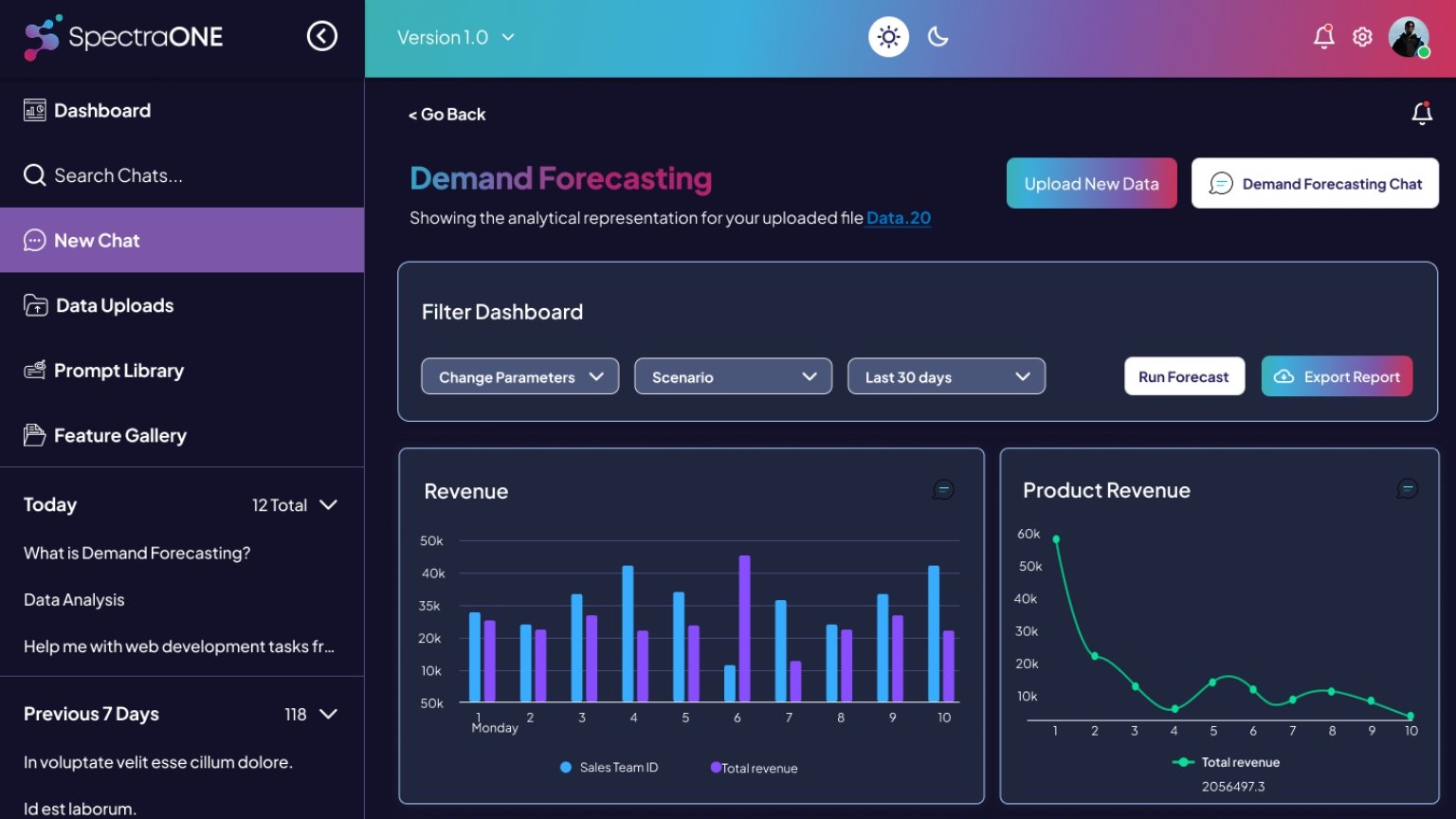

SpectraONE is built to make explainability part of the workflow, not an add-on.

It connects demand, supply, inventory, production, and logistics into a unified intelligence layer. Forecast outputs come with driver context, confidence indicators, and early signal detection, so teams can see what’s changing, what’s driving it, and how reliable it appears.

The goal isn’t just “better numbers.” It’s better decisions: faster alignment, clearer financial visibility, and more confident execution under uncertainty.

Looking Ahead

As AI reshapes supply chain planning, the big question will increasingly shift from “Can the model predict accurately?” to “Can we trust it, and can we operationalize it at scale?”

Explainable AI is what bridges that gap. It helps planners and leaders understand the “why” behind the forecast, apply judgment with confidence, and build resilience into daily decisions.

The real value of AI in forecasting isn’t only better predictions. It’s clearer reasoning, better alignment, and more confidence when it matters most.